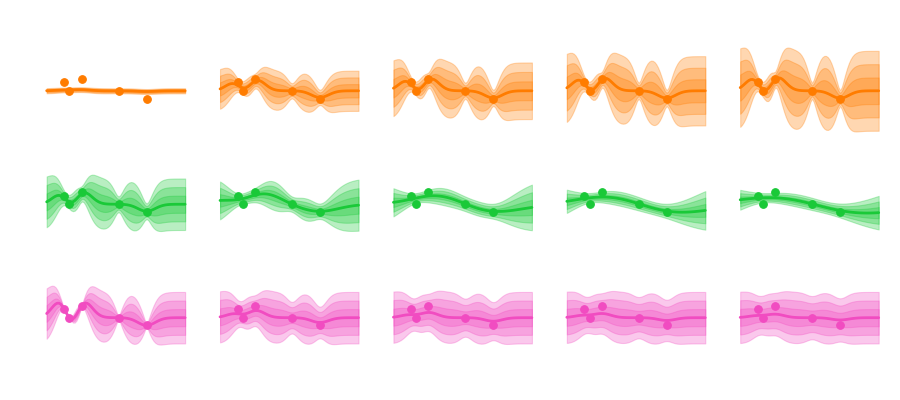

This was a small proof-of-concept side project derived from our larger ICLR publication. It turns out that learning visual representations in a self-supervised manner with a temporal coherence loss can explain the phenomenon of color constancy [1].

[1] M. R. Ernst, F. M. López, A. Aubret, R. W. Fleming and J. Triesch, “Self-Supervised Learning of Color Constancy,” 2024 IEEE International Conference on Development and Learning (ICDL), Austin, TX, USA, 2024, pp. 1-7, doi: 10.1109/ICDL61372.2024.10644375.